Wednesday, June 16, 2010

SILENT STARS...

Classifying 3D Warehouse Evaluations

Enrico Dalbosco (Arrigo Silva) - Padua, June 2010

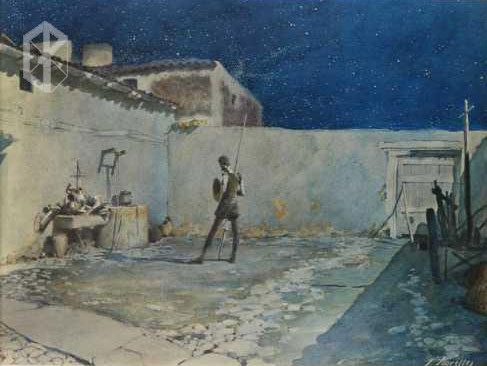

What do the stars say

What do the stars say

to Don Quixote during

his Vigil Of Arms?

What do they say

to All The People

throughout infinite nocturnal sky??

What did the stars say

to Poets Wizards

Navigators Astrologers

Scientists Astronomers

Night Owls

Nightingales Drunkards???

Who knows…

Often, alas, they stay quietly silent.

...

I tried to make this classification in a strictly objective, without questioning the sincerity of assessments or the merits of the evaluator intentions or psychology etc. but only basing on the analysis of words and phrases actually present in 3D warehouse evaluations.

Some other topics that might be explored: relationship between evaluations and so called 3D warehouse rating; classification of users based on their evaluations; cluster analysis on user cross-evaluations.

The following table illustrates the main categories of evaluations present in 3D Warehouse. On each row I have brought a separate category, identified by a code (which I will use later) and characterized on the number of stars (5, 1 to 4, or 0 stars) and the type of evaluation.

Let me now express some thoughts that I have gained about the various types of assessment.

A-POSITIVE EVALUATIONS

The more frequent evaluations are those of category A-Silent and A-Generic that do not bring any contribution to a critical analysis of the contents of the model. The evaluations A-Gdeneric often denote a marked laudatory attitude without helping us to understand on wich strength of the model it is based (I would call this sub-category as... G-Wow!).

Less frequent but much more interesting are A-Specific (positive) evaluations, and even less frequent A-Specific (mixed) evaluations, that instead help the model author (and all the readers, thus including all 3D warehouse modelers) to understand what are the aspects considered more (or less) valid by the evaluator.

B-NON POSITIVE EVALUATIONS

I have classified as type B all evaluations form 1 to 4 stars involving a partially or completely non positive assessment of the model by the assessor.

Much frequent are the B-Silent and B-Generic evaluations that do not bring any contribution to a critical analysis of the contents of the model, unlike those B-Specific (negative) that can help us understand why the assessment is not positive.

A special consideration deserve B-Grimace evaluations because they contain a contradiction in terms... Here I do not want to explain my personal judgement, and leave to the acuity and the perspicacity of the readers draw their own conclusions.

C-NULL EVALUATIONS

This types of evaluations are relatively infrequent. In some cases they depend on evaluator mistake, in othe they are messages (less or more undestandable) that the evaluator wants to send to model Author or to the 3D warehouse colleagues…

.

Enrico Dalbosco (Arrigo Silva) - Padua, June 2010

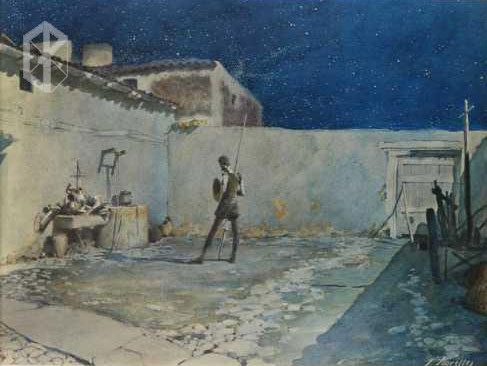

What do the stars say

What do the stars sayto Don Quixote during

his Vigil Of Arms?

What do they say

to All The People

throughout infinite nocturnal sky??

What did the stars say

to Poets Wizards

Navigators Astrologers

Scientists Astronomers

Night Owls

Nightingales Drunkards???

Who knows…

Often, alas, they stay quietly silent.

...

I tried to make this classification strictly objective, without questioning the sincerity of the assessments or judging the evaluators' intentions, psychology and so on, basing it only on the analysis of the words and phrases actually present in 3D Warehouse evaluations.

Some other topics that might be explored: the relationship between evaluations and the so-called 3D Warehouse rating; the classification of users based on their evaluations; cluster analysis of user cross-evaluations.

CATEGORIES OF EVALUATION IN 3D WAREHOUSE

The following table illustrates the main categories of evaluations present in 3D Warehouse. Each row presents a separate category, identified by a code (which I will use later) and characterized by the number of stars (5, 1 to 4, or 0 stars) and the type of evaluation.

| Category | Rating | Typical review content | Example |
| --- | --- | --- | --- |
| A-Silent | 5 stars | no words | -- |
| A-Generic [A-Wow] | 5 stars | not specific [too generic / too laudatory / others] | Great! / The best of the best! / Vote my models! / See you next week... |
| A-Specific(pos) | 5 stars | specific > positive | I like these excellent textures because... |
| A-Specific(mix) | 5 stars | specific > positive with some criticisms | Very well structured and light, even if there are... |
| B-Silent | 1 to 4 stars | no words | -- |
| B-Generic | 1 to 4 stars | not specific | I dislike your model... |
| B-Grimace | 1 to 4 stars | specific > positive (?) or negative with some appreciations (?) | Nice textures... / Bad model with nice textures... |
| B-Specific(neg) | 1 to 4 stars | specific > negative | Poor textures and misaligned... |
| C-Silent | (no stars) | no words | -- |
| C-Generic | (no stars) | not specific | Visit my site at... / See you next month... |
| C-Specific(pos) | (no stars) | specific > positive | I like these excellent textures because... |
| C-Specific(mix) | (no stars) | specific > not positive | Poor textures... |
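The table can be read as a two-step lookup: the number of stars selects the group (A, B or C), and the type of written content selects the suffix. Here is a minimal Python sketch of that reading. The names `Content`, `star_group` and `category_code` are hypothetical, chosen only for illustration and not part of 3D Warehouse, and the content type is assumed to be assigned by a human reader rather than detected automatically.

```python
from enum import Enum


class Content(Enum):
    """Type of written content, as judged by a human reader (assumption)."""
    SILENT = "Silent"
    GENERIC = "Generic"
    SPECIFIC_POS = "Specific(pos)"
    SPECIFIC_MIX = "Specific(mix)"
    SPECIFIC_NEG = "Specific(neg)"
    GRIMACE = "Grimace"


# Content types that the table lists for each star group.
ALLOWED = {
    "A": {Content.SILENT, Content.GENERIC,
          Content.SPECIFIC_POS, Content.SPECIFIC_MIX},
    "B": {Content.SILENT, Content.GENERIC,
          Content.GRIMACE, Content.SPECIFIC_NEG},
    "C": {Content.SILENT, Content.GENERIC,
          Content.SPECIFIC_POS, Content.SPECIFIC_MIX},
}


def star_group(stars: int) -> str:
    """Map a star count to its group: 5 -> A, 1 to 4 -> B, 0 -> C."""
    if stars == 5:
        return "A"
    if 1 <= stars <= 4:
        return "B"
    if stars == 0:
        return "C"
    raise ValueError(f"stars must be between 0 and 5, got {stars}")


def category_code(stars: int, content: Content) -> str:
    """Build the category code used in the table, e.g. 'B-Grimace'."""
    group = star_group(stars)
    if content not in ALLOWED[group]:
        raise ValueError(f"the table has no {group}-{content.value} row")
    return f"{group}-{content.value}"


if __name__ == "__main__":
    print(category_code(5, Content.GENERIC))
    print(category_code(3, Content.GRIMACE))
    print(category_code(0, Content.SILENT))
```

Combinations that the table does not list, such as a 5-star Grimace, are rejected here rather than mapped to a nearby category; that is a design choice of this sketch, not something the classification itself prescribes.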

Let me now share some thoughts I have formed about the various types of evaluation.

A-POSITIVE EVALUATIONS

The most frequent evaluations are those of categories A-Silent and A-Generic, which contribute nothing to a critical analysis of the model's contents. A-Generic evaluations often show a markedly laudatory attitude without helping us understand which strengths of the model the praise is based on (I would call this sub-category... A-Wow!).

Less frequent but much more interesting are the A-Specific (positive) evaluations, and less frequent still the A-Specific (mixed) evaluations, which help the model's author (and all readers, including all 3D Warehouse modelers) understand which aspects the evaluator considers more (or less) valid.

B-NON POSITIVE EVALUATIONS

I have classified as type B all evaluations from 1 to 4 stars, which imply a partially or completely non-positive assessment of the model by the evaluator.

Very frequent are the B-Silent and B-Generic evaluations, which contribute nothing to a critical analysis of the model's contents, unlike the B-Specific (negative) evaluations, which can help us understand why the assessment is not positive.

B-Grimace evaluations deserve special consideration because they contain a contradiction in terms... Here I do not want to give my personal judgement; I leave it to the acuity and perspicacity of readers to draw their own conclusions.

C-NULL EVALUATIONS

These types of evaluations are relatively infrequent. In some cases they result from a mistake by the evaluator; in others they are messages (more or less understandable) that the evaluator wants to send to the model's author or to 3D Warehouse colleagues…

Comments:

A little comment about null evaluations: I think you can't give a 'C-Silent' rating, because a warning text appears saying: 'Please enter a rating or review in order to save'.

Anyway, many thanks for sharing your thoughts and tutorials!

Greetings!
