Sunday, June 30, 2013

Appendix B1 - Testing a 3D Machine

Some instructive experiments with the Autodesk 123D Generator

Ten photographs of a church on a hill - ordinary photos of about 150 KB each.

Are they enough to automatically create a realistic 3D model using photogrammetry techniques?

This is what we will see in this appendix!

Enrico Dalbosco (Arrigo Silva) - Padova, June 30, 2013


AUTO-GENERATED 3D MODELS

APPENDIX B1

The Autodesk 123D-Catch Generator Machine

© Enrico Dalbosco (Arrigo Silva) April-June 2013

Last update: June 30, 2013

Index of contents

PART 1 - ENVIRONMENT

PART 2 - A VISUAL CONFRONTATION

PART 3 - CEZANNE'S LESSON

PART 4 - [... work in progress ...]

Appendix A - ABOUT ST. JOHN AUTO-GENERATED

Appendix B - THE BIG-3D-GENERATOR MACHINE

Appendix C - Our Old Models Now Very Slim

APPENDIX B1 - THE AUTODESK 123D-CATCH GENERATOR MACHINE

Following on from those in Appendix B, in this appendix I present some experiments I carried out on an interesting and intriguing site that puts an automatic 3D model generator at our disposal, free of charge.

I personally ran some tests by uploading photos (or shots) and getting the corresponding 3D models back within a few minutes, as you can see below.

Some references:

- the site is www.123dapp.com

- the function that we can use to get the models is 123D-Catch

- to use the features of the site we need to register

- we must provide 123D-Catch with 7 to 70 photographs of an object or scene

- within a few minutes 123D-Catch creates a 3D model that can be viewed and downloaded

- on the site there are thousands of 123D-Catch models created from users' photos


Warning. The tests I did do not purport to cover all the features and possibilities of Autodesk 123D-Catch, and they are not sufficient to pass judgment on this product, which nevertheless seems to me very interesting and innovative. I think, however, that they are of general interest and allow us to make some stimulating reflections on the possibilities and limitations of machines for the automatic generation of 3D models of objects and also of buildings, city blocks and whole countries.

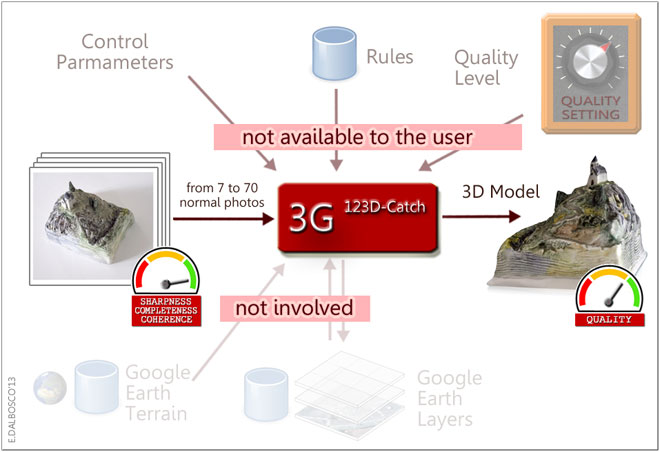

B1-0 THE 3G [123D-Catch] MACHINE

In accordance with Appendix B, here's how we can outline the context in which the 3G123D-Catch Machine works:

Some clarifications:

- the user is limited to providing normal photographs taken around the object

- the number of shots can vary from a minimum of 7 to a maximum of 70

- there are no binding constraints on focal length, image size, quality or other camera settings


- the shots can be provided in any order

- 123D-Catch always tries to create the corresponding 3D model, even if incomplete

- the resulting 3D model is shown to the user through a dedicated online viewer and can be downloaded as an .stl file

- none of the control parameters of 123D-Catch is accessible to the user (to be precise, the user can only set the final quality of the model to "normal" or "high")

- 123D-Catch does not use georeferenced objects or information about the territory (consistent with the fact that the photographs are not georeferenced)
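The one hard constraint in the list above, the number of shots, can be checked before uploading. This is a minimal sketch: only the limits 7 and 70 come from the text, while the function name and the file names are hypothetical and have nothing to do with the site itself.

```python
MIN_PHOTOS = 7    # lower bound stated in this appendix
MAX_PHOTOS = 70   # upper bound stated in this appendix


def check_photo_count(photo_names):
    """Return (ok, message) for a list of photo file names."""
    n = len(photo_names)
    if n < MIN_PHOTOS:
        return False, f"too few photos: {n} (at least {MIN_PHOTOS} needed)"
    if n > MAX_PHOTOS:
        return False, f"too many photos: {n} (at most {MAX_PHOTOS} allowed)"
    return True, f"{n} photos: within the accepted range"


# Hypothetical file names for a set of 10 shots.
shots = [f"shot_{i:02d}.jpg" for i in range(1, 11)]
print(check_photo_count(shots))
```
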

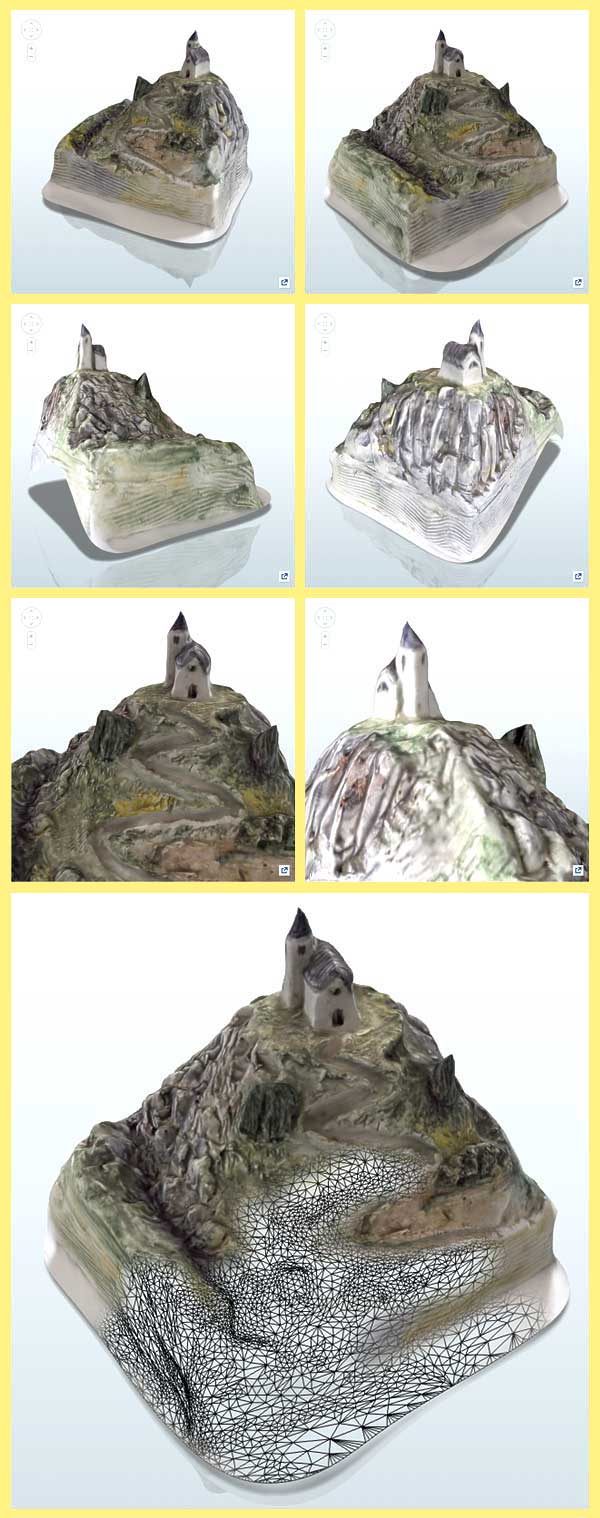

B1-1 TEST #1: A CHURCH ON THE HILL (MY CERAMIC MODEL)

For the first test I used one of my ceramic models, which represents a small church on top of a hill. I created this and many other original ceramic models after attending, with my wife, a ceramics course at Nove Bassano, Italy, in the 1990s; you can see this model on my site at www.enricodalbosco.it.

Revolving around this little model (about 10x9x9 cm) I took exactly 10 photographs, as if I were flying around a real landscape in my ultralight airplane.

The original pictures (which you can download from the model page on 123dapp.com) are approximately 1000x1000 pixels and each occupies less than 150 KB. The quality is quite good, although some came out a bit blurred (but I kept them on purpose, to see what would happen).

I uploaded these 10 pictures to 123dapp.com, waited a few minutes, carried out a few more operations to assign a title, description, etc. and to validate the model, and finally got the 3D Model fully auto-generated by the 3G123D-Catch Machine.
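To give an idea of what "revolving around" the model means in numbers, here is a small sketch that spaces the shots evenly on a circle. The even spacing is an assumption (the shots were taken by hand), and the radius and height values are purely hypothetical.

```python
import math


def orbit_positions(n_shots, radius, height):
    """Return (step_in_degrees, positions) for cameras evenly spaced
    on a horizontal circle centred on the object."""
    step = 360.0 / n_shots
    positions = []
    for i in range(n_shots):
        angle = math.radians(i * step)
        positions.append((radius * math.cos(angle),
                          radius * math.sin(angle),
                          height))
    return step, positions


# 10 shots around the object; radius and height (in cm) are hypothetical.
step, cameras = orbit_positions(10, radius=30.0, height=15.0)
print(f"one shot every {step} degrees")
for x, y, z in cameras:
    print(f"{x:7.2f} {y:7.2f} {z:7.2f}")
```

With 10 shots the step is 36 degrees; with 18 or 12 shots it would be 20 or 30 degrees.
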

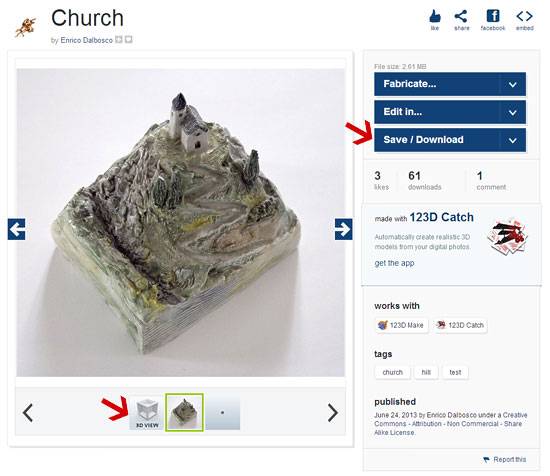

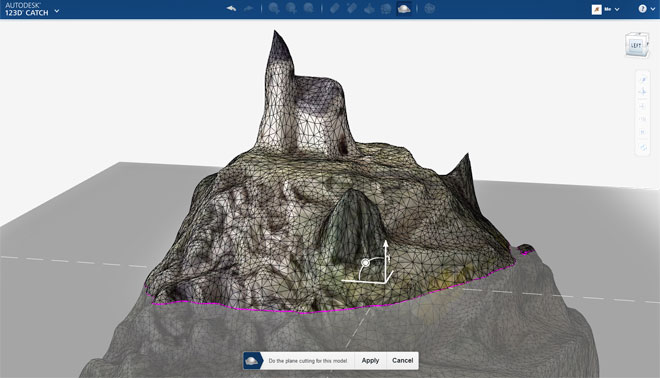

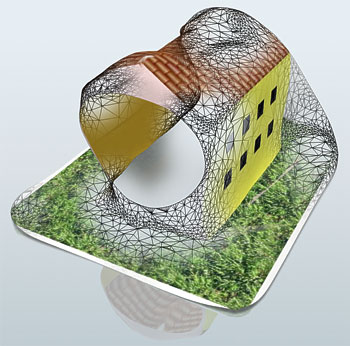

Here is the screen that shows the generated model and allows 3D visualization (arrow at the bottom left) and download of the model (arrow at the top right):

And here are some views of the auto-generated model:

(I obtained the last view in Photoshop by partially blending a "Material only" view with an "Outlines only" view)

What do you think?

Some considerations:

- I must confess that I was amazed by the simplicity of the procedure, the speed of the result and also by its quality

- circling the 3D model you may notice some imperfections, more visible from some points of view, especially in the rendering of sharp objects (e.g. the cypress and the tower)

- at the rear of the base, the generated model has a hole, probably because the photographs were a bit overexposed at that point

- other imperfections can be seen where the model meets the base (a white sheet of paper) on which I had placed it

- the model was created using a fairly dense network of triangles (shown in wireframe mode); the size of the triangles is fairly constant

- the generated model weighs 2.6 MB, of which 1.15 MB can (probably) be attributed to the textures
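To put a number on how dense the network of triangles is, one can count the triangles in a downloaded .stl file. The sketch below assumes the file is a binary STL (80-byte header, a 4-byte triangle count, then 50 bytes per triangle); whether the site delivers binary or ASCII STL is not something I have verified, and the helper `make_binary_stl` exists only to build a tiny test file. A plain STL stores geometry only, so its size says nothing about the textures.

```python
import struct


def stl_triangle_count(data):
    """Read the triangle count from the bytes of a binary STL file."""
    if len(data) < 84:
        raise ValueError("too short to be a binary STL")
    (count,) = struct.unpack_from("<I", data, 80)  # count follows the 80-byte header
    if len(data) != 84 + 50 * count:
        raise ValueError("size does not match a binary STL (maybe ASCII STL?)")
    return count


def make_binary_stl(triangles):
    """Build binary STL bytes from (normal, v1, v2, v3) tuples (test helper)."""
    out = bytearray(b"\0" * 80)                # header, contents ignored
    out += struct.pack("<I", len(triangles))   # number of triangles
    for normal, v1, v2, v3 in triangles:
        # 12 floats (normal + 3 vertices) and a 2-byte attribute field
        out += struct.pack("<12fH", *normal, *v1, *v2, *v3, 0)
    return bytes(out)


# A unit square made of two triangles, used here only as sample data.
up = (0.0, 0.0, 1.0)
square = [
    (up, (0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0)),
    (up, (0.0, 0.0, 0.0), (1.0, 1.0, 0.0), (0.0, 1.0, 0.0)),
]
print(stl_triangle_count(make_binary_stl(square)))
```

For a real file, read it with `open(path, "rb").read()` and pass the bytes to `stl_triangle_count`.
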

B1-2 TEST #2: A SIMPLE BOX (ROCKWELL'S CANDIES)

For the second test I chose one of my SketchUp models that is particularly simple and well textured: a candy box in the shape of a parallelepiped, decorated with wonderful paintings by Norman Rockwell, which I used to illustrate my article "Rockwell's Candies, A personal approach to 3D Model evaluation".

Around this model I took 18 shots using the Export>2D Graphic function with Options>Width=1000 pixels, Height=768 pixels, Better quality highest.

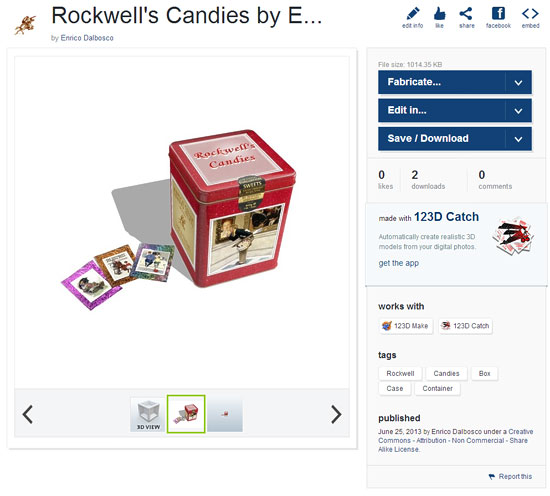

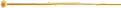

And this is the resulting 3D Model fully auto-generated by the 3G123D-Catch Machine from my 18 photos:

Also in this case the result looks pleasant and of good quality, but we can make some observations that highlight the differences from Test #1 (A church on the hill):

- all the shots were taken in conditions that can be considered optimal compared with what you can get with a camera: SketchUp's Export function gives a perfectly uniform "camera lens", lights, shadows etc.

- nevertheless, in this model too we can note small but fairly obvious deformations, namely rounded edges and corners of the box

- in this case there are no holes in the walls of the box

- we can note slight imperfections at the base of the model (a slightly wavy white tongue)

- the model weighs only about 1 MB, and is therefore much lighter than the previous one, even though the textures I sent are much heavier (but perhaps the 3D Machine has not used them all)

- the geometric structure of the model is still made up of a fairly homogeneous grid of triangles, which remain fairly dense even where they represent a strictly flat wall (in my model all the walls of the box are perfectly flat)

B1-3 TEST #3: A VERY SIMPLE HOUSE (FROM A SKETCHUP MODEL)

For the third test I created with SketchUp a model of house deliberately simple and "strange", with some of the walls without doors or windows and painted with colors perfectly flat.


To tell the truth, I had initially made even the roof and the base flat-colored, but the 3D Machine was not able to process my shots... :( , and so I had to fall back on the partially textured model.

Around this model I took 12 shots using the Export>2D Graphic function with Options>Width=1000 pixels, Height=768 pixels, Better quality highest.

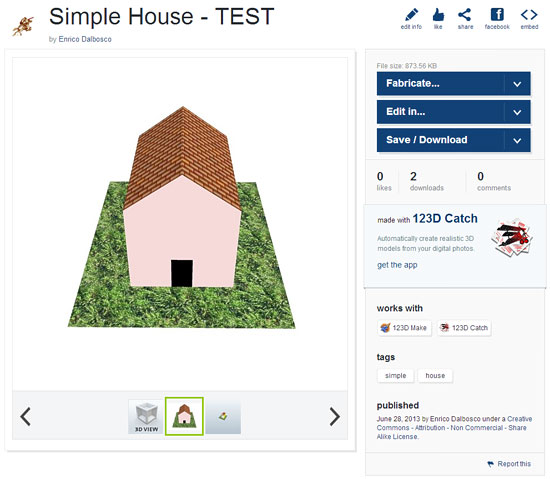

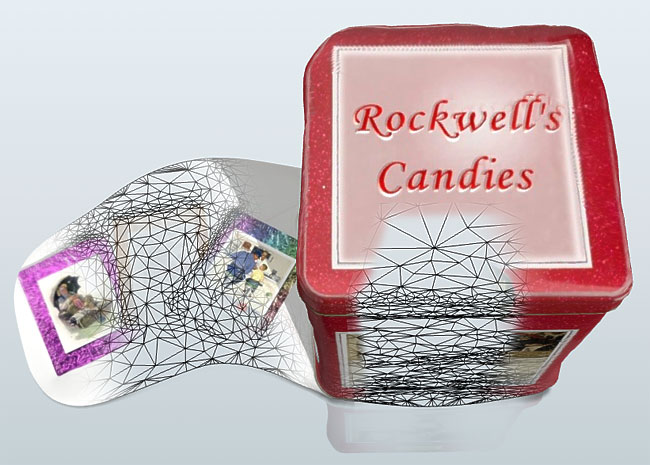

And this is the resulting 3D Model fully auto-generated by the 3G123D-Catch Machine from my 12 photos:

In this case the result is disappointing compared with the two previous cases, but bear in mind that 123D-Catch is a machine that works on "real" photos of real objects and not on "fictitious" shots of 3D models! However, we can make a few observations, once again highlighting the differences from Test #1 (A church on the hill) and Test #2 (Rockwell's Candies):

- even though the shots were comparable (in quality etc.) to those of Test #2, the result has serious faults that compromise its quality

- the machine was able to interpret the ground and the wall with the windows well, because it had "frequent" and precise references there, while on the flat walls it did not know how to behave and left them incomplete; on the roof too, which was well textured, very flat and sharp, the machine was misled (perhaps because it misinterpreted the "light-and-dark" tiles)

- finally, in this case too the geometry is based on a dense network of small triangles, which are only a little less dense on the perfectly flat walls
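The difficulty with flat-colored walls can be illustrated with a tiny sketch. It is only an illustration under my own assumptions, not a description of how 123D-Catch works internally: it assumes that matching points between photos relies on local detail, and it uses the grey-level variance of a patch as a crude measure of that detail. The function name and the two miniature "images" are hypothetical.

```python
def patch_variance(image, top, left, size):
    """Variance of the grey levels in a size x size patch of a 2D list."""
    values = [image[r][c]
              for r in range(top, top + size)
              for c in range(left, left + size)]
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


# An 8x8 wall painted with one perfectly flat colour (grey level 200).
flat_wall = [[200] * 8 for _ in range(8)]

# An 8x8 checkerboard, standing in for a richly textured surface.
textured = [[255 if (r + c) % 2 == 0 else 0 for c in range(8)]
            for r in range(8)]

print(patch_variance(flat_wall, 0, 0, 8))  # no detail at all
print(patch_variance(textured, 0, 0, 8))   # plenty of detail
```

On the flat wall every patch looks identical to every other, so there are no "references" to tell one point from another; on the textured surface each patch carries detail that can be recognised again in another photo.
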

B1-4 OTHER INTERESTING MODELS

On the Autodesk 123D website there are many other intriguing models auto-generated by many users.

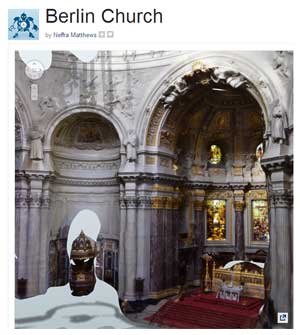

Some of these models are of particular interest to the central theme of the article to which this Appendix refers. I will limit myself to pointing out a few, leaving you the pleasure of making further discoveries. Here, therefore, are some images; clicking on each one opens the page of the corresponding model.

- Berlin Church (22.0 MB) by Neffra Matthews

- Casa Pojnar Oradea Romania (9.9 MB) by Gabriel Popa

- Heptapyrgion (5.3 MB) by Kostas Chaidemenos

- Sagene Kirke (18.0 MB) by Ronald Kabicek

B1-5 FINAL CONSIDERATIONS

The number and quality of the tests performed and the cases examined do not allow me to give an accurate overall assessment of the 3G123D-Catch Machine.

I would in fact need to perform other tests and experiments to understand and demonstrate, for example, whether and how the user can improve the quality of the results by providing more suitable photos, in terms of the number of shots, their quality, the viewpoints used etc.

However, I can, at the moment, draw the following tentative conclusions:

- the machine proves able to operate even when the photographs do not completely describe the object, still generating a cloud of points in the right positions in three-dimensional space, colored in accordance with the corresponding photographs

- the geometry created by the machine consists of a grid of triangles that covers all surfaces and always stays quite dense, even when the surfaces to be represented are perfectly flat (this makes me think that it does not adopt specific strategies to reduce complexity)

- the machine works very well with sharp, richly textured photos, while it fails to correctly interpret photographs of flat surfaces with flat colors

- more investigation is needed to understand why certain distortions appear at critical points, edges and corners, and to understand how shortcomings (or characteristics) of the individual photographs (level of definition, lenses used, brightness, lights and shadows, focus) and the consistency between photographs (e.g. lights and shadows, moving objects) can affect the quality and complexity of the result

- one thing we can say with some certainty: there is still plenty of room for improvement (quality, complexity, control parameters), and the current limits will surely be overcome.

In a nutshell: the machine examined looks very interesting, innovative, powerful and fun to use. You get the impression that it can magically reproduce varied and very complex objects from a few well-taken photographs, but also that at present it is very difficult to obtain, with any degree of certainty "a priori", perfect and smudge-free results.
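The remark about complexity on flat surfaces can be made concrete with a little arithmetic. A flat rectangular wall needs only 2 triangles, while a uniform grid of small triangles needs two for every cell. The sketch below compares the two; the wall dimensions and the cell size are hypothetical, chosen only for illustration, and are not measured from the generated models.

```python
import math

MIN_TRIANGLES_FLAT_RECT = 2  # a flat rectangle can be split into two triangles


def grid_triangles(width, height, cell):
    """Triangles in a uniform grid of square cells of side `cell`
    covering a width x height rectangle (two triangles per cell)."""
    cols = math.ceil(width / cell)
    rows = math.ceil(height / cell)
    return 2 * cols * rows


# Hypothetical wall of 10 x 6 units covered with cells of 0.5 units.
dense = grid_triangles(10, 6, 0.5)
print(dense, "triangles in the grid versus", MIN_TRIANGLES_FLAT_RECT)
print("ratio:", dense // MIN_TRIANGLES_FLAT_RECT)
```

Halving the cell size multiplies the number of triangles by four, which is why a mesh that stays dense on flat walls grows heavy so quickly.
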

END OF APPENDIX B1

See you next episode...
